Categories: String & binary functions (AI Functions)

AI_

Generates a response (completion) for a text prompt using a supported language model. This variant of the function enhances AI_COMPLETE with document understanding capabilities. The prompt can reference information or images found in a file containing a document. The function supports a single document input.

Syntax

The function has three required arguments and one optional argument. The function can be used with either positional or named argument syntax.

Using AI_COMPLETE with a single image input:
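A sketch of the call shape in both forms, inferred from the argument names listed under Arguments. Angle-bracket names are placeholders and square brackets mark the optional argument; the named form assumes the usual `=>` named-argument syntax and is not a verbatim copy of the reference signature.

```sql
-- Positional form: model, predicate, file, then the optional model_parameters.
AI_COMPLETE( <model>, <predicate>, <file> [, <model_parameters> ] )

-- Named form: the same arguments passed by name (assumed => syntax).
AI_COMPLETE(
    model => <model>,
    predicate => <predicate>,
    file => <file>
    [, model_parameters => <model_parameters> ] )
```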

Arguments

model: A string specifying the model to be used. Specify one of the following models:

- claude-3-7-sonnet
- claude-4-opus
- claude-4-sonnet
- claude-haiku-4-5
- claude-opus-4-5
- claude-opus-4-6
- claude-opus-4-7
- claude-sonnet-4-5
- claude-sonnet-4-6
- gemini-3.1-pro
- llama4-maverick
- llama4-scout
- openai-gpt-4.1
- openai-gpt-5
- openai-gpt-5-chat
- openai-gpt-5-mini
- openai-gpt-5-nano
- openai-gpt-5.1
- openai-gpt-5.2
- openai-gpt-5.4
- pixtral-large

Supported models might have different costs.

predicate: A string prompt.

file: A FILE type object representing an image.

model_parameters: An object containing zero or more of the following options that affect the model’s hyperparameters. See LLM Settings.

- temperature: A value from 0 to 1 (inclusive) that controls the randomness of the output of the language model. A higher temperature (for example, 0.7) results in more diverse and random output, while a lower temperature (such as 0.2) makes the output more deterministic and focused. Default: 0

- top_p: A value from 0 to 1 (inclusive) that controls the randomness and diversity of the language model, generally used as an alternative to temperature. The difference is that top_p restricts the set of possible tokens that the model outputs, while temperature influences which tokens are chosen at each step. Default: 0

- max_tokens: Sets the maximum number of output tokens in the response. Small values can result in truncated responses. Default: 4096. Maximum allowed value: 8192.

- guardrails: Filters potentially unsafe and harmful responses from a language model using Cortex Guard. Either TRUE or FALSE. The default value is FALSE.
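As an illustration of how these options might be passed, the following sketch uses the named-argument form with an object literal for model_parameters. The stage `@my_images`, the file `chart.png`, the prompt, and the chosen option values are all hypothetical; only temperature is set because top_p is described above as an alternative to it.

```sql
-- Assumptions: @my_images is a hypothetical stage; chart.png is a hypothetical file.
SELECT AI_COMPLETE(
    model => 'claude-4-sonnet',
    predicate => 'Summarize what this image shows in one sentence.',
    file => TO_FILE('@my_images', 'chart.png'),
    model_parameters => {
        'temperature': 0.2,   -- low value for more deterministic output
        'max_tokens': 1024,   -- well below the 8192 maximum
        'guardrails': TRUE    -- enable Cortex Guard filtering
    }
) AS response;
```

Options left out of the object fall back to the defaults listed above.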

Returns

Returns the string response from the language model.

Examples

The following examples demonstrate the basic capabilities of the AI_COMPLETE function with images.

Visual question answering

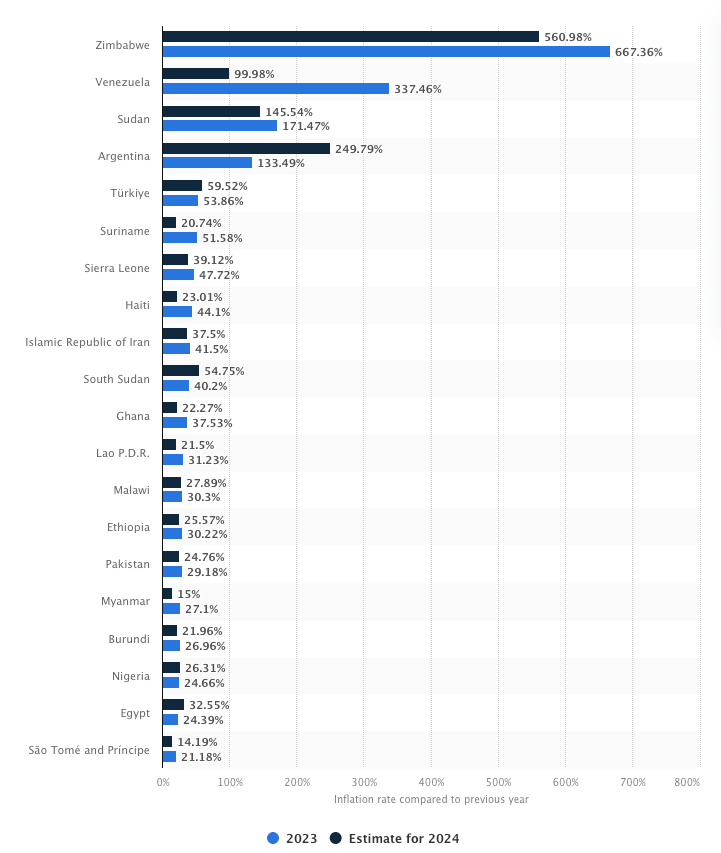

A chart of inflation rates is used to answer a question about the data.

Comparison between inflation rates in 2023 and in 2024 (Statista)
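A query for this scenario might look like the following sketch, which uses the positional form. The stage name `@my_images`, the file name `inflation-chart.png`, the choice of model, and the wording of the question are assumptions made for illustration.

```sql
-- Assumptions: the chart is staged as inflation-chart.png on a hypothetical stage @my_images.
SELECT AI_COMPLETE(
    'claude-4-sonnet',
    'Which country will observe the largest inflation change in 2024 compared to 2023?',
    TO_FILE('@my_images', 'inflation-chart.png')
) AS answer;
```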

Response:

Entity extraction from an image

This example extracts the entities (objects) from an image and returns the results in JSON format.
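A minimal sketch of such a query, assuming a hypothetical image `kitchen.png` on a hypothetical stage `@my_images`. The prompt wording and the model choice are illustrative; asking for JSON in the prompt requests that format but the function still returns a string.

```sql
-- Assumptions: @my_images and kitchen.png are hypothetical names.
SELECT AI_COMPLETE(
    'claude-4-sonnet',
    'List the objects visible in this image. Respond in JSON only.',
    TO_FILE('@my_images', 'kitchen.png')
) AS entities;
```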

Response:

Usage notes for processing images

- Only text and images are supported. Video and audio files are not supported.

- Supported image formats: .jpg, .jpeg, .png, .gif, .webp. pixtral and llama4 models also support .bmp.

- The maximum image size is 10 MB for most models, and 3.75 MB for claude models. claude models do not support images with resolutions above 8000x8000.

- The stage containing the images must have server-side encryption enabled. Client-side encrypted stages are not supported.

- The function does not support custom network policies.

- Stage names are case-insensitive; paths are case-sensitive.
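The stage and file constraints above can be sketched as follows. The stage name `my_images` is hypothetical, and the size check reads 10 MB as 10,000,000 bytes, which is the stricter of the two possible interpretations; lower the threshold for claude models and add `'%.bmp'` for pixtral or llama4 models.

```sql
-- Hypothetical stage with server-side encryption and a directory table enabled.
CREATE STAGE IF NOT EXISTS my_images
    DIRECTORY = (ENABLE = TRUE)
    ENCRYPTION = (TYPE = 'SNOWFLAKE_SSE');

-- List staged files that pass the format and size limits before calling AI_COMPLETE.
-- Assumes the decimal reading of 10 MB (10,000,000 bytes).
SELECT relative_path, size
FROM DIRECTORY(@my_images)
WHERE relative_path ILIKE ANY ('%.jpg', '%.jpeg', '%.png', '%.gif', '%.webp')
  AND size <= 10 * 1000 * 1000;
```

The relative_path values returned here are case-sensitive and can be passed as the second argument of TO_FILE.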

Note

AI_COMPLETE is the updated version of COMPLETE. For the latest functionality, use AI_COMPLETE.

Legal notices

Refer to Snowflake AI and ML for legal notices.