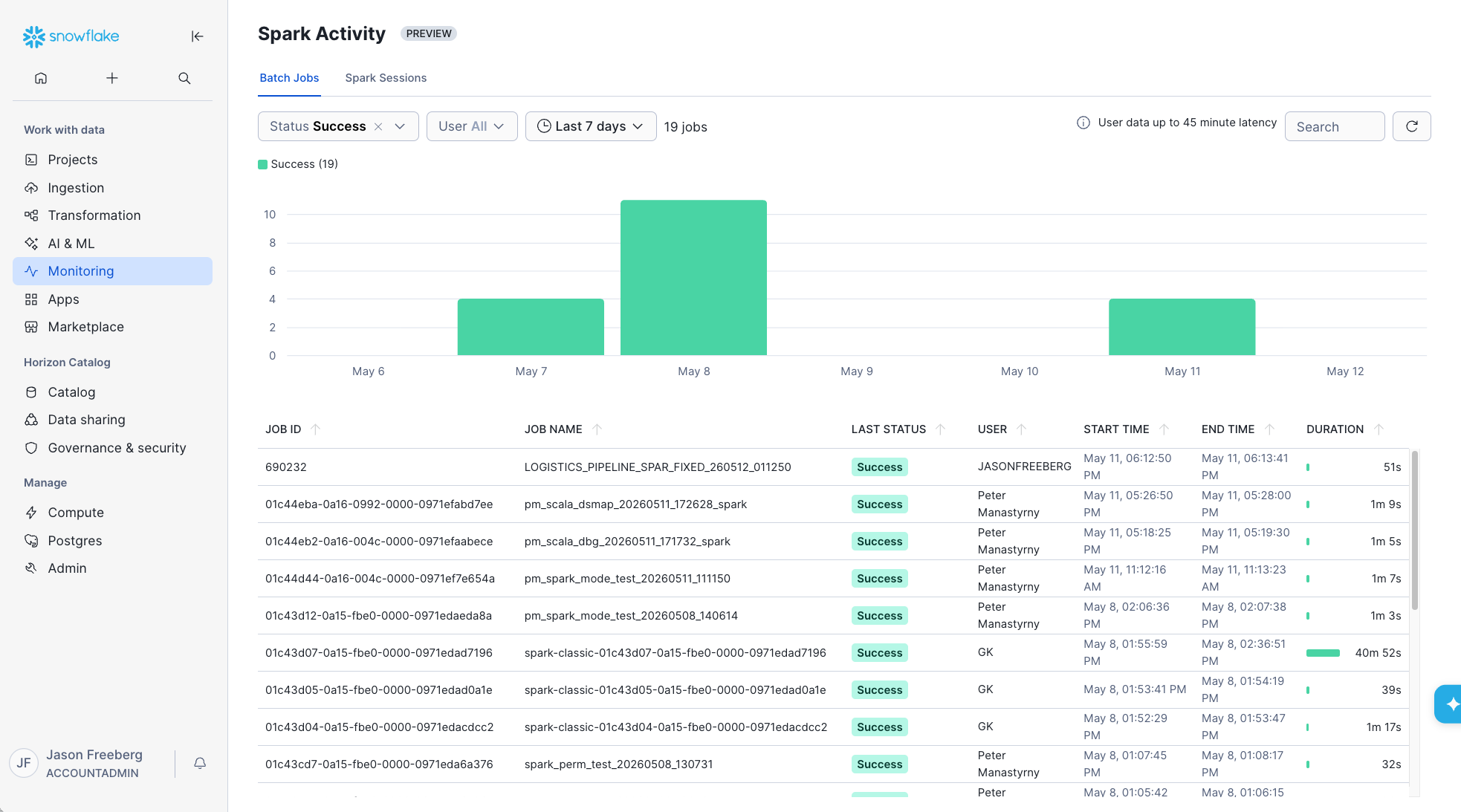

Snowpark Connect History UI

The Spark Activity UI provides a view of Snowpark Connect for Spark usage and history across your Snowflake account. This UI consolidates the relevant information from each Snowpark Connect for Spark job or interactive session to aid in monitoring and troubleshooting.

All jobs run through the snowpark-submit CLI appear under the Batch Jobs tab with their status, duration, name, and owner. Each Spark session connection is tracked under the Spark Sessions tab with its queries and owner.

The following sections cover how to open the page and how each tab behaves.

Open Spark Activity

- Sign in to Snowsight.

- Select Monitoring » Spark Activity.

Batch Jobs Tab

The Batch Jobs tab lists every invocation of the snowpark-submit utility for your account. For each run you can see duration, who submitted the job, and status.

For more background on installing the CLI, command-line options, and batch patterns, see Orchestration with Snowpark Connect for Spark and the snowpark-submit command reference.

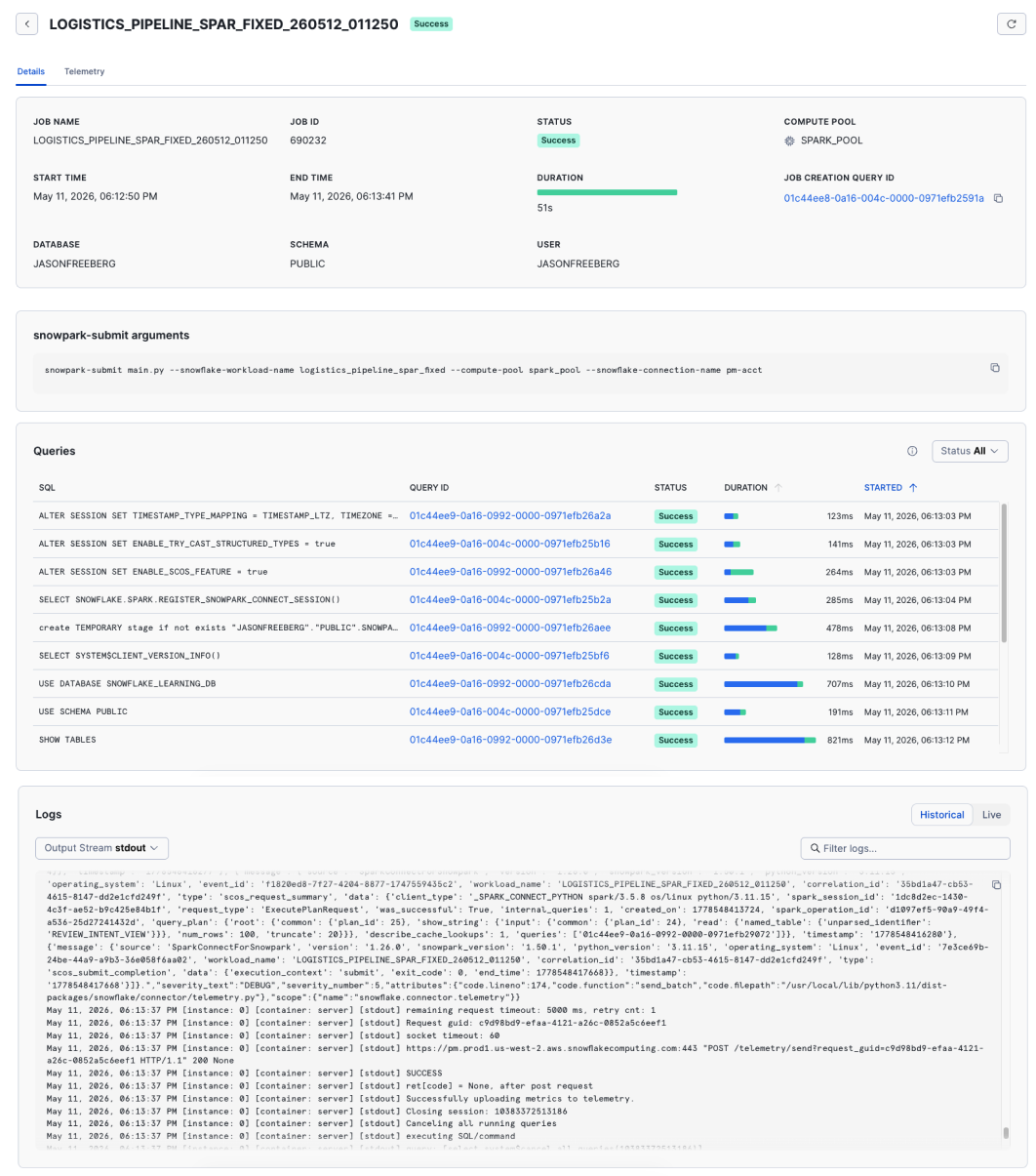

Batch Job Details Page

Select a row to open the details view for that run. The details include:

- The full snowpark-submit command line that was executed

- Queries associated with the job

- Log output and stderr from the run

- An OpenTelemetry trace for the job

For log and event-table behavior for submit workloads, see Monitoring Snowpark Connect for Spark workloads.

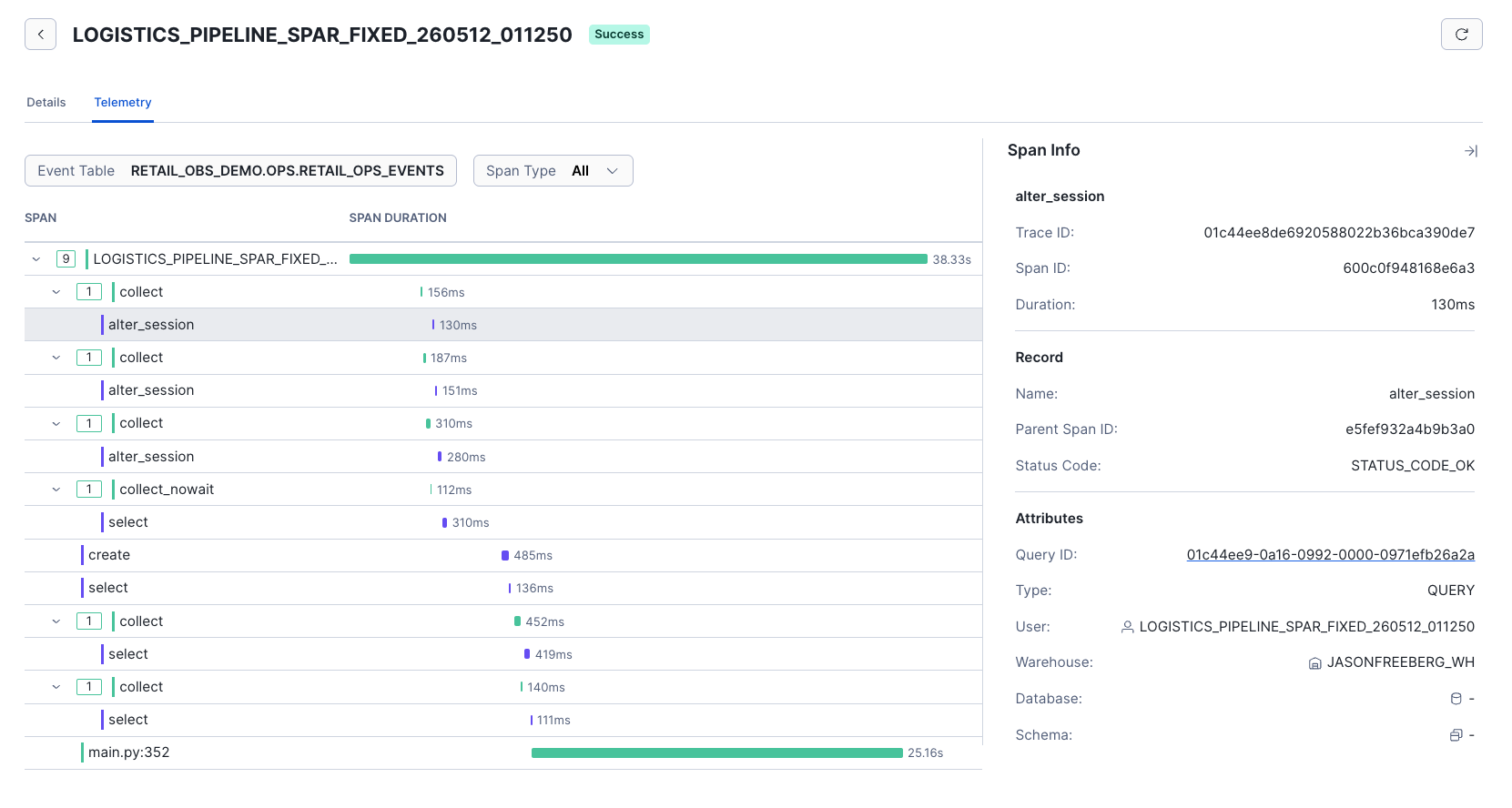

Batch Job Trace Tab

The Trace tab on the Details page shows an OpenTelemetry trace of the job execution. Each DataFrame action is represented by a span, and the queries associated with each action are shown as child spans. This makes it easier to see which portions of your job take the longest to run, and to reach the Query Profile for any slow or failed DataFrame action.

For how trace data is represented and how to view or query it in your event table, see Viewing trace data.
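The span hierarchy described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the span records are hand-written sample data, and the field names (span_id, parent_span_id, name, start, end) are hypothetical rather than the event-table schema. It also simplifies the trace to two levels, with DataFrame actions as parents and queries as children.

```python
from collections import defaultdict

# Hypothetical sample spans; field names are assumptions for illustration,
# not the schema used by the event table. Times are in seconds.
spans = [
    {"span_id": "a1", "parent_span_id": None, "name": "count", "start": 0.0, "end": 4.0},
    {"span_id": "q1", "parent_span_id": "a1", "name": "query-1", "start": 0.5, "end": 3.5},
    {"span_id": "a2", "parent_span_id": None, "name": "collect", "start": 4.0, "end": 13.0},
    {"span_id": "q2", "parent_span_id": "a2", "name": "query-2", "start": 4.5, "end": 6.0},
    {"span_id": "q3", "parent_span_id": "a2", "name": "query-3", "start": 6.0, "end": 12.5},
]


def slowest_actions(spans):
    """Return (action name, duration, child query names), slowest action first."""
    # Group child (query) spans under the span ID of their parent action.
    children = defaultdict(list)
    for span in spans:
        if span["parent_span_id"] is not None:
            children[span["parent_span_id"]].append(span)

    # In this simplified model, spans without a parent are DataFrame actions.
    actions = [s for s in spans if s["parent_span_id"] is None]
    actions.sort(key=lambda s: s["end"] - s["start"], reverse=True)

    return [
        (a["name"], a["end"] - a["start"], [c["name"] for c in children[a["span_id"]]])
        for a in actions
    ]


report = slowest_actions(spans)
for name, seconds, queries in report:
    print(f"{name}: {seconds:.1f}s, queries={queries}")
```

Sorting actions by duration and listing their child queries mirrors what the Trace tab lets you do visually: find the slowest DataFrame action first, then follow its queries to the Query Profile.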

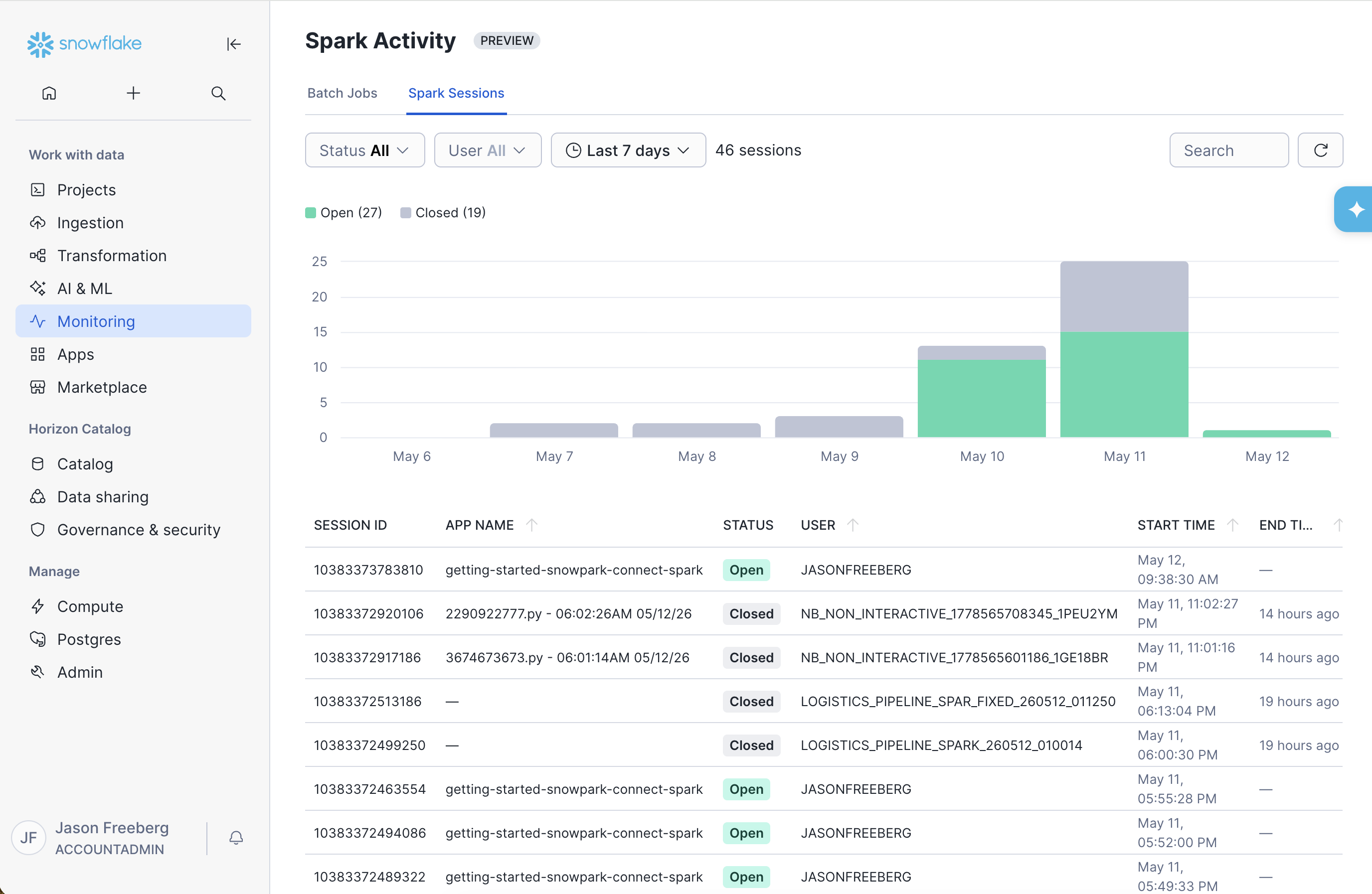

Spark Sessions Tab

The Spark Sessions tab lists Snowpark Connect for Spark client sessions that have connected to your account. That includes sessions from local laptops, Snowflake Notebooks, Snowflake Workspaces, and other supported clients.

For each session, Snowsight shows when the session started, who started it, the session ID, and the session name.

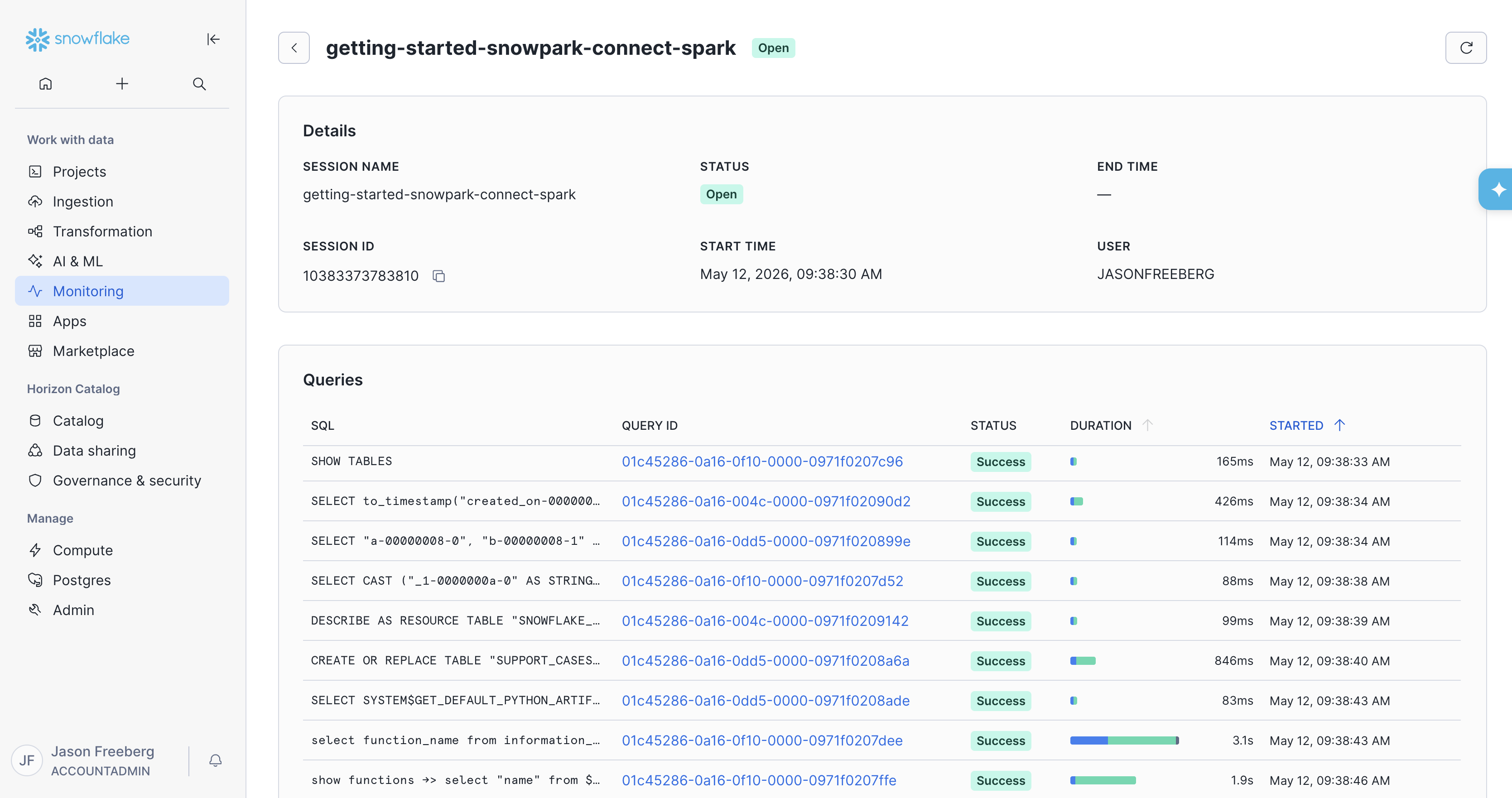

Spark Session Details Page

The details page for a Spark session shows all of the queries issued from that session. Select the Query ID for any of these queries to open its Query Profile, which you can use to debug long-running or failed queries.

Setting the App Name

The app name comes from the app_name argument when you call init_spark_session. If you set app_name, that value appears as the session name in Snowsight.
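A minimal sketch of passing app_name is shown here. The init_spark_session function and its app_name argument come from this page. The import path, the call form, and the start_named_session wrapper are assumptions for illustration, so confirm them against the init_spark_session reference for your installed version.

```python
def start_named_session(app_name: str = "my_app_name"):
    """Start a Snowpark Connect for Spark session with an explicit session name."""
    # Assumption: import path and call form may differ in your installed
    # version. The import is deferred so that defining this helper does not
    # require the package; calling it does, along with a configured connection.
    from snowflake import snowpark_connect

    # app_name is the value Snowsight displays as the session name.
    return snowpark_connect.init_spark_session(app_name=app_name)
```

Calling start_named_session() in an environment with Snowpark Connect for Spark installed and a Snowflake connection configured would return the Spark session.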

In this example, my_app_name is shown as the session name.

If you do not pass app_name, Snowpark Connect uses the file name where the session was created as the app_name (for example main.py). For how the Python API derives the default application name, see the app_name parameter on init_spark_session.

Limitations

- If you use Snowpark Connect in a scheduled Snowflake Notebook (EXECUTE NOTEBOOK PROJECT), those runs are not yet shown in the Batch Jobs tab. However, the Sessions for those jobs are shown in the Spark Sessions tab.

- As of May 12, 2026, it may take up to 30 minutes for the Queries card to show the queries for a Batch Job.