Using the JDBC Driver¶

This topic provides information about how to use the JDBC driver.

Snowflake JDBC API extensions¶

The Snowflake JDBC driver supports additional methods beyond the standard JDBC specification. This section documents how to use unwrapping to access the Snowflake-specific methods, then describes three of the situations in which you might need to unwrap:

- Performing an asynchronous query.

- Uploading data files directly from a stream to an internal stage.

- Downloading data files directly from an internal stage to a stream.

Unwrapping Snowflake-specific classes¶

The Snowflake JDBC driver supports Snowflake-specific methods. These methods are defined in Snowflake-specific

Java-language interfaces, such as SnowflakeConnection, SnowflakeStatement, and SnowflakeResultSet. For example,

the SnowflakeStatement interface contains a getQueryID() method that is not in the JDBC Statement interface.

When the Snowflake JDBC driver is asked to create a JDBC object (e.g. create a JDBC Statement object by

calling a Connection object’s createStatement() method), the Snowflake JDBC driver actually creates

Snowflake-specific objects that implement not only the methods of the JDBC standard, but also the additional methods

from the Snowflake interfaces.

To access these Snowflake methods, you “unwrap” an object (such as a Statement object) to expose the Snowflake

object and its methods. You can then call the additional methods.

The following code shows how to unwrap a JDBC Statement object to expose the methods of the

SnowflakeStatement interface, and then call one of those methods, in this case setParameter:

Performing an asynchronous query¶

The Snowflake JDBC Driver supports asynchronous queries, such as queries that return control to the user before they finish. You can start a query and then use polling to determine when the query has finished. When it does, the user can read the result set.

This feature allows a client program to run multiple queries in parallel without the client program itself using multi-threading.

Asynchronous queries use methods added to the SnowflakeConnection, SnowflakeStatement, SnowflakePreparedStatement, and

SnowflakeResultSet classes.

Note

To perform asynchronous queries, you must ensure the ABORT_DETACHED_QUERY configuration parameter is FALSE (default value).

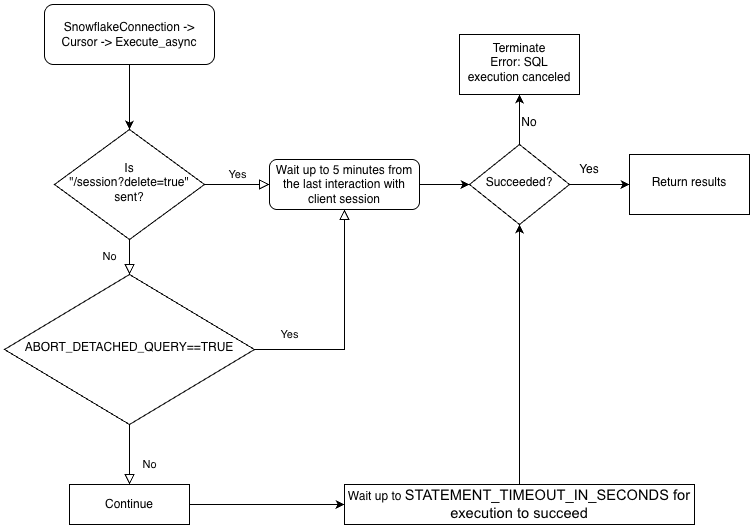

If the connection to client is lost:

- For synchronous queries, all in-progress synchronous queries are aborted immediately regardless of the parameter value.

- For asynchronous queries:

-

If ABORT_DETACHED_QUERY is set to

FALSE, in-progress asynchronous queries continue to run until they end normally. -

If ABORT_DETACHED_QUERY is set to

TRUE, Snowflake automatically aborts all in-progress asynchronous queries when a client connection is not re-established after five minutes.You can prevent the asynchronous query from being aborted at the five minute mark by calling

cursor.query_result(queryId). While this call does not retrieve the actual query result as the query is still running, it does prevent the query from being canceled. Invokingquery_resultis a synchronous operation, which might or might not be appropriate for your particular use case.

-

You can run a mix of synchronous and asynchronous queries in the same session.

Note

Asynchronous queries don’t support PUT/GET statements.

When executeAsyncQuery(query) is used, the Snowflake JDBC driver automatically keeps track of the queries submitted asynchronously. When the connection is explicitly closed with connection.close(), the list of async queries is examined and, if any of them are still running, the Snowflake-side session is not deleted.

If no async queries are running within the same connection, the Snowflake session belonging to the connection is logged out when connection.close() is called, which implicitly cancels all other queries running in the same session.

This behavior also depends on the SQL ABORT_DETACHED_QUERY parameter. For more information, see the ABORT_DETACHED_QUERY parameter documentation.

As a best practice, isolate all long-running async tasks (especially those intended to continue after the connection is closed) into a separate connection.

To better understand the hierarchy of the drivers’ business logic and the ABORT_DETACHED_QUERY parameter’s interaction, see the following flowchart:

Best practices for asynchronous queries¶

-

Ensure that you know which queries are dependent on other queries before you run any queries in parallel. Queries that are interdependent and order-sensitive are not suitable for parallelizing. For example, an INSERT statement should not start until after the corresponding CREATE TABLE statement has finished.

-

Ensure that you do not run too many queries for the memory that you have available. Running multiple queries in parallel typically consumes more memory, especially if more than one ResultSet is stored in memory at the same time.

-

When polling, handle the rare cases where a query does not succeed. For example, avoid the following potential infinite loop:

Instead, use code similar to the following:

Instead, use code similar to the following:

-

Ensure that transaction control statements (BEGIN, COMMIT, and ROLLBACK) are not executed in parallel with other statements.

Examples of asynchronous queries¶

Most of these examples require that the program import classes as shown below:

This is a very simple example:

This example stores the query ID, closes the connection, re-opens the connection, and uses the query ID to retrieve the data:

This is a very simple example:

This example stores the query ID, closes the connection, re-opens the connection, and uses the query ID to retrieve the data:

Upload data files directly from a stream to an internal stage¶

You can upload data files using the PUT command. However, sometimes it makes sense to transfer data directly from a

stream to an internal (i.e. Snowflake) stage as a file. (The stage

can be any internal stage type: table stage, user stage, or named stage. The JDBC driver does not support uploading to an external

stage.) Here is the method exposed in the SnowflakeConnection class:

Sample usage:

Sample usage:

Code written for JDBC Driver versions prior to 3.9.2 might cast SnowflakeConnectionV1 rather than

unwrap SnowflakeConnection.class. For example:

Note

Customers using newer versions of the driver should update their code to use unwrap.

Download data files directly from an internal stage to a stream¶

You can download data files using the GET command. However, sometimes it makes sense to transfer data directly from a

file in an internal (i.e. Snowflake) stage to a stream. (The stage

can be any internal stage type: table stage, user stage, or named stage. The JDBC driver does not support downloading to an

external stage.) Here is the method exposed in the SnowflakeConnection class:

Sample usage:

Sample usage:

Code written for JDBC Driver versions prior to 3.9.2 might cast SnowflakeConnectionV1 rather than

unwrap SnowflakeConnection.class. For example:

Multi-statement support¶

This section describes how to execute multiple statements in a single request using the JDBC Driver.

Note

- Executing multiple statements in a single query requires that a valid warehouse is available in a session.

- By default, Snowflake returns an error for queries issued with multiple statements to protect against SQL injection .

Executing multiple statements in a single query increases the risk of SQL injection. Snowflake recommends using it sparingly.

To reduce the SQL injection risk, use the

SnowflakeStatementclass’ssetParameter()method to specify the number of statements to be executed, which makes it more difficult to inject a statement by appending it. For more information aboutSnowflakeStatement, see Interface: `SnowflakeStatement`.

Sending multiple statements and handling results¶

Queries containing multiple statements can be executed the same way as queries with a single statement, except that the query string contains multiple statements separated by semicolons.

There are two ways to allow multiple statements:

-

Call Statement.setParameter(“MULTI_STATEMENT_COUNT”, n) to specify how many statements at a time this Statement should be allowed to execute. See below for more details.

-

Set the MULTI_STATEMENT_COUNT parameter at the session level or the account level by executing one of the following commands:

Or:

Setting the parameter to 0 allows an unlimited number of statements. Setting the parameter to 1 allows only one statement at a time.

In order to make SQL Injection attacks more difficult, users can call the setParameter method to

specify the number of statements to be executed in a single call, as shown below.

In this example, the number of statements to execute in a single call is 3:

The default number of statements is 1; in other words, multi-statement mode is off.

To execute multiple statements without specifying the exact number, pass a value of 0.

The MULTI_STATEMENT_COUNT parameter is not part of the JDBC standard; it is a Snowflake extension. This parameter affects more than one Snowflake driver/connector.

When multiple statements are executed in a single execute() call, the result of the first statement is

available through the standard getResultSet() and getUpdateCount() methods.

To access the results of the statements that follow, use the getMoreResults() method.

This method returns true when more statements are available for iteration, and false otherwise.

The example below sets the MULTI_STATEMENT_COUNT parameter, executes 3 statements, and retrieves update counts and result sets:

Snowflake recommends using execute() for multi-statement queries.

The methods executeQuery() and executeUpdate() also support multiple statements, but will throw an exception if the first result is not the expected result type (result set and update count, respectively).

Failed statements¶

If any of the SQL statements fails to compile or execute, execution is aborted. Any previous statements that ran before are unaffected.

For example, if the statements below are run as a single multi-statement query, the query will fail on the third statement, and an exception will be thrown.

If you were to then query the contents of table test, values 1 and 2 would be present.

Unsupported features¶

PUT and GET statements are not supported for multi-statement queries.

Preparing statements and using bind variables are also not supported for multi-statement queries.

Binding variables to statements¶

Binding allows a SQL statement to use a value that is stored in a Java variable.

Simple binding¶

Without binding, a SQL statement specifies values by specifying literals inside the statement. For example, the following

statement uses the literal value 42 in an UPDATE statement:

With binding, you can execute a SQL statement that uses a value that is inside a variable. For example:

The ? inside the VALUES clause specifies that the SQL statement uses the value from a variable. The setInt() method

specifies that the first question mark in the SQL statement should be replaced with the value in the variable named

my_integer_variable. Note that setInt() uses 1-based, rather than 0-based values (i.e. the first question mark is

referenced by 1, not 0).

Binding variables to timestamp columns¶

Snowflake supports three different variations for timestamps: TIMESTAMP_LTZ , TIMESTAMP_NTZ , TIMESTAMP_TZ. When you call

PreparedStatement.setTimestamp to bind a variable to a timestamp column, the JDBC Driver interprets the timestamp value in

terms of the local time zone (TIMESTAMP_LTZ) or the time zone of the Calendar object passed in as an argument:

If you want the driver to interpret the timestamp using a different variation (e.g. TIMESTAMP_NTZ), use one of the

following approaches:

-

Set the session parameter CLIENT_TIMESTAMP_TYPE_MAPPING to the variation.

Note that the parameter affects all binding operations for the current session. If you need to change the variation (e.g. back to

TIMESTAMP_LTZ), you must set this session parameter again. -

(In the JDBC Driver 3.13.3 and later versions) Call the

PreparedStatement.setObjectmethod, and use thetargetSqlTypeparameter to specify one of the following Snowflake timestamp variations:SnowflakeType.EXTRA_TYPES_TIMESTAMP_LTZSnowflakeType.EXTRA_TYPES_TIMESTAMP_TZSnowflakeType.EXTRA_TYPES_TIMESTAMP_NTZSnowflakeType.EXTRA_TYPES_VECTORSnowflakeType.EXTRA_TYPES_DECFLOATSnowflakeType.EXTRA_TYPES_YEAR_MONTH_INTERVALSnowflakeType.EXTRA_TYPES_DAY_TIME_INTERVAL

For example:

SnowflakeUtil.EXTRA_TYPES_TIMESTAMP_LTZSnowflakeUtil.EXTRA_TYPES_TIMESTAMP_TZSnowflakeUtil.EXTRA_TYPES_TIMESTAMP_NTZ

For example:

Batch inserts¶

In your Java application code, you can insert multiple rows in a single batch by binding parameters in an INSERT statement and

calling addBatch() and executeBatch().

As an example, the following code inserts two rows into a table that contains an INTEGER column and a VARCHAR column. The example

binds values to the parameters in the INSERT statement and calls addBatch() and executeBatch() to perform a batch

insert.

When you use this technique to insert a large number of values, the driver can improve performance by streaming the data (without creating files on the local machine) to a temporary stage for ingestion. The driver automatically does this when the number of values exceeds a threshold.

In addition, the current database and schema for the session must be set. If these are not set, the CREATE TEMPORARY STAGE command executed by the driver can fail with the following error:

Note

For alternative ways to load data into the Snowflake database (including bulk loading using the COPY command), see Load data into Snowflake.

Java sample program¶

For a working sample written in Java, right-click the name of the file, SnowflakeJDBCExample.java, and save the link/file to your local file system.

Troubleshooting¶

I/O error: Connection reset¶

In some cases, the JDBC Driver might fail with the following error message after a period of inactivity:

You can work around the problem by setting a specific “time to live” for the connections. If a connection is idle for longer than the “time to live”, the JDBC Driver removes the connection from the connection pool and creates a new connection.

To set the time to live, set the Java system property named net.snowflake.jdbc.ttl to the number of seconds that the

connection should live:

-

To set this property programmatically, call

System.setProperty: -

To set this property when running the

javacommand, use the-Dflag:

The default value of the net.snowflake.jdbc.ttl property is -1, which means that idle connections are not removed from

the connection pool.

Handling errors¶

When handling errors and exceptions for a JDBC application, you can use the ErrorCode.java file that Snowflake provides to determine the cause of the problems. Error codes specific to the JDBC driver start with 2, in the form: 2_NNNNN_.

Note

The link to the ErrorCode.java in the public snowflake-jdbc git repository points to the latest version of the file, which might differ from the version of the JDBC driver you currently use.