Overview of Datometry for Snowflake

Important

Product — Public Preview

Currently available as an RPM deployment on a Red Hat-compatible Linux VM on AWS, Azure, and Google Cloud, along with a valid deployment on Snowflake in North America, Western Europe, the United Kingdom, and select other countries.

Not available in government and VPS deployments that require FedRAMP and other certifications.

This is a product overview of Datometry for Snowflake along with key requirements.

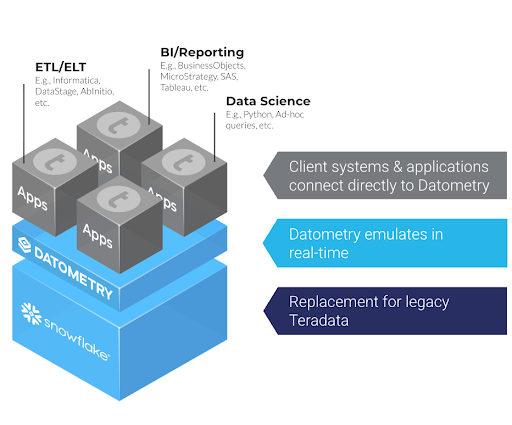

Datometry for Snowflake is a database virtualization platform that enables enterprises to transition Teradata™ workloads to Snowflake while preserving applications and long-standing investments in them. By translating SQL in real-time, Datometry allows existing applications to run natively on Snowflake with identical results, interoperability, and minimal switching costs.

1. Core Architecture

Datometry for Snowflake is a gateway situated between the enterprise’s existing applications (on-premises or cloud) and the Snowflake AI Data Cloud. Datometry can be deployed on premises or in a cloud environment. It is distributed as an RPM for the customer to deploy and manage on a Red Hat-compatible Linux VM.

Simple Deployment

- Architecture: Datometry is a stateless database virtualization engine deployed within the customer’s environment, either on premises or in the cloud.

- Scalability: Datometry scales vertically with CPU resources and horizontally across multiple VMs using a standard load balancer.

- Self-Contained: Datometry has no external dependencies; all auxiliary metadata is stored directly within Snowflake.

Supported Application Types

- All commonly used SQL and protocols as of Teradata 14.0 and higher are supported.

- All data warehousing applications that previously connected to Teradata via ODBC or JDBC drivers, or other connectors, work immediately with Datometry.

- Datometry is used for both ETL systems and BI/Reporting applications, including Informatica™, IBM DataStage™, AbInitio™, SAS™, MicroStrategy™, Cognos™, SAP Business Objects™, and Tableau™.

Supported Data Types and Formats

- Datometry supports all data types and formats commonly used in Teradata SQL, including data types not natively available in Snowflake.

- With Datometry, customers can use all Snowflake built-in table types as well as Iceberg tables.

Unsupported Application Types

- Datometry supports systems connecting via TCP/IP. Other connection types, such as those used by mainframe systems, are not currently supported.

- Certain workload types are not currently supported by Datometry. A Datometry-specific assessment is recommended to determine whether such workloads or patterns exist.

2. The Platform Intelligence

Central to Datometry is the XTRA (Flexible and Extensible Algebra) engine. Unlike code translation tools or transpilers, Datometry performs a deep semantic analysis of every SQL request.

- Analysis & Type Inference: Datometry analyzes the incoming Teradata SQL, resolves table/column references, and performs type inference for all variables.

- XTRA Transformation: The query is represented internally in the XTRA algebra. Datometry performs normalization and optimization specifically for Snowflake’s architecture.

- Snowflake SQL Synthesis: Datometry generates optimized Snowflake SQL to leverage Snowflake’s massively parallel processing (MPP) capabilities.

- Result Conversion: Result sets from Snowflake are converted and returned to the application in the format it expects.
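The parse, represent, and synthesize stages above can be pictured with a deliberately tiny sketch. This is not Datometry's implementation and does not reflect the actual XTRA representation: the `Select` class and both function names are hypothetical, and the parser only handles a toy subset of Teradata SQL (the `SEL` abbreviation and `TOP n`). Type inference and result conversion are left out.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


# Hypothetical, minimal stand-in for an algebraic query representation.
@dataclass(frozen=True)
class Select:
    columns: Tuple[str, ...]
    table: str
    limit: Optional[int] = None


def parse_teradata(sql: str) -> Select:
    """Parse a toy subset: SEL|SELECT [TOP n] col, ... FROM table."""
    tokens = sql.strip().rstrip(";").split()
    if tokens[0].upper() not in ("SEL", "SELECT"):
        raise ValueError("only SELECT statements are handled in this sketch")
    pos = 1
    limit = None
    if tokens[pos].upper() == "TOP":
        limit = int(tokens[pos + 1])
        pos += 2
    from_idx = next(i for i, t in enumerate(tokens) if t.upper() == "FROM")
    column_text = " ".join(tokens[pos:from_idx])
    columns = tuple(c.strip() for c in column_text.split(","))
    return Select(columns, tokens[from_idx + 1], limit)


def synthesize_snowflake(query: Select) -> str:
    """Emit SQL for the target dialect from the intermediate representation."""
    sql = f"SELECT {', '.join(query.columns)} FROM {query.table}"
    if query.limit is not None:
        sql += f" LIMIT {query.limit}"
    return sql


translated = synthesize_snowflake(parse_teradata("SEL TOP 5 id, name FROM customers;"))
print(translated)
```

Separating parsing from synthesis through an intermediate form is what lets normalization and target-specific optimization happen in between, rather than rewriting text pattern by pattern.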

3. Metadata Management

For features where no direct mapping exists between Teradata and Snowflake (e.g., SET Tables, Global Temporary Tables), Datometry uses a specialized Metadata Store. The store holds Teradata-specific properties, which are read at run time to emulate SQL behavior and convert results.

- Metadata Store: A collection of objects stored within a dedicated Snowflake schema.

- Functionality: It records how specific objects should be interpreted. For example, if a table is marked as a “SET Table,” Datometry enforces row uniqueness during INSERT operations, even though the underlying Snowflake table is standard.

- Synchronization: Storing metadata within Snowflake ensures that schema annotations and actual data remain perfectly synchronized.
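To make the SET Table example concrete, the following sketch shows the uniqueness rule itself on in-memory rows. It is only a conceptual illustration: the source does not describe how Datometry enforces the rule, and the function name `filter_set_table_rows` and the tuple-based row model are assumptions made for this example.

```python
from typing import Iterable, List, Set, Tuple

Row = Tuple[object, ...]


def filter_set_table_rows(existing: Set[Row], incoming: Iterable[Row]) -> List[Row]:
    """Return the incoming rows that keep a SET-style table free of duplicates.

    A row is dropped if it already exists in the table or if an identical
    row appeared earlier in the same batch.
    """
    seen = set(existing)
    accepted: List[Row] = []
    for row in incoming:
        if row not in seen:
            seen.add(row)
            accepted.append(row)
    return accepted


table_rows = {(1, "a")}
batch = [(1, "a"), (2, "b"), (2, "b"), (3, "c")]
accepted_rows = filter_set_table_rows(table_rows, batch)
print(accepted_rows)
```

The underlying table stays an ordinary table; only the annotation that marks it as a SET Table triggers the extra check.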

4. Coverage and Compatibility

While there are no technical limits to virtualization, Datometry primarily supports commonly used SQL and APIs. In practice, Datometry provides an average of 99.5% syntax coverage for typical Teradata workloads.

- Supported Features: Case sensitivity/insensitivity, Recursion, Macros, Stored Procedures, Global Temporary Tables, and Teradata-specific NULL handling.

- Data Parity: Datometry reconciles the semantic differences between systems to deliver bit-identical results.

- Teradata Workload Assessment: To understand how much of a Teradata workload Datometry covers and to identify any workarounds needed, an assessment of the Teradata schema and query logs is conducted. To learn more about this critical step, contact your Snowflake representative.

5. Implementation Workflow: Repoint, Test, Transition

The transition to Snowflake follows a non-disruptive, phased approach:

- Analyze: Use the Datometry assessment to inspect Teradata schema and query logs and determine workload compatibility.

- Synchronize: Move initial data and schemas into Snowflake.

- Repoint: Update application connection strings (JDBC/ODBC) to the Datometry endpoint.

- Verify: Execute dual-run testing to ensure parity between Teradata and Snowflake results.

- Transition: Cut over production traffic and decommission legacy Teradata hardware.
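The Verify step can be sketched as a row-level comparison of the two result sets from a dual run. This is a minimal illustration, assuming both result sets have already been fetched as lists of tuples by whatever drivers the application uses; the function name `compare_result_sets` is hypothetical and not part of any Datometry or Snowflake tooling.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

Row = Tuple[object, ...]


def compare_result_sets(
    teradata_rows: Iterable[Row], snowflake_rows: Iterable[Row]
) -> Dict[str, List[Row]]:
    """Compare two result sets as multisets, ignoring row order.

    Duplicate rows count: a row returned twice by one system and once by
    the other is reported as a difference.
    """
    td = Counter(map(tuple, teradata_rows))
    sf = Counter(map(tuple, snowflake_rows))
    return {
        "only_in_teradata": list((td - sf).elements()),
        "only_in_snowflake": list((sf - td).elements()),
    }


diff = compare_result_sets(
    [(1, "a"), (2, "b"), (2, "b")],
    [(1, "a"), (2, "b"), (3, "c")],
)
print(diff)
```

Comparing as multisets rather than ordered lists avoids false mismatches for queries without an ORDER BY clause; queries that do specify an order would need an additional positional check.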

For details on product capabilities, a demo, or the product guide, or to check whether you are eligible to use Datometry for your transition away from Teradata, please contact your Snowflake representative.